AI Video File Moderation

Stream’s video moderation engine, powered by AWS Rekognition, uses AI to analyze uploaded videos for policy-violating content. Unlike image moderation, video analysis runs asynchronously, frame by frame, so a thorough review does not add latency to your application.

Key Features:

- Detect inappropriate content, violence, and explicit material

- Analyze video frames for policy violations

- Moderate video content at scale

- Receive frame-by-frame analysis with timestamps

- Get confidence scores for detected violations

Supported Categories

Our video moderation engine analyzes frames to detect:

- Explicit Content:

  - Pornographic material

  - Explicit sexual acts

- Non-Explicit Nudity:

  - Exposed intimate body parts

  - Intimate physical contact

- Swimwear/Underwear:

  - People in revealing swimwear

  - Underwear detection

- Violence & Visually Disturbing:

  - Fighting and physical aggression

  - Weapons

  - Gore and blood

  - Disturbing imagery

- Substances:

  - Drug-related content and paraphernalia

  - Tobacco products

  - Alcoholic beverages

- Inappropriate Content:

  - Rude gestures

  - Offensive body language

  - Gambling-related content

  - Hate symbols and extremist imagery

Limitations

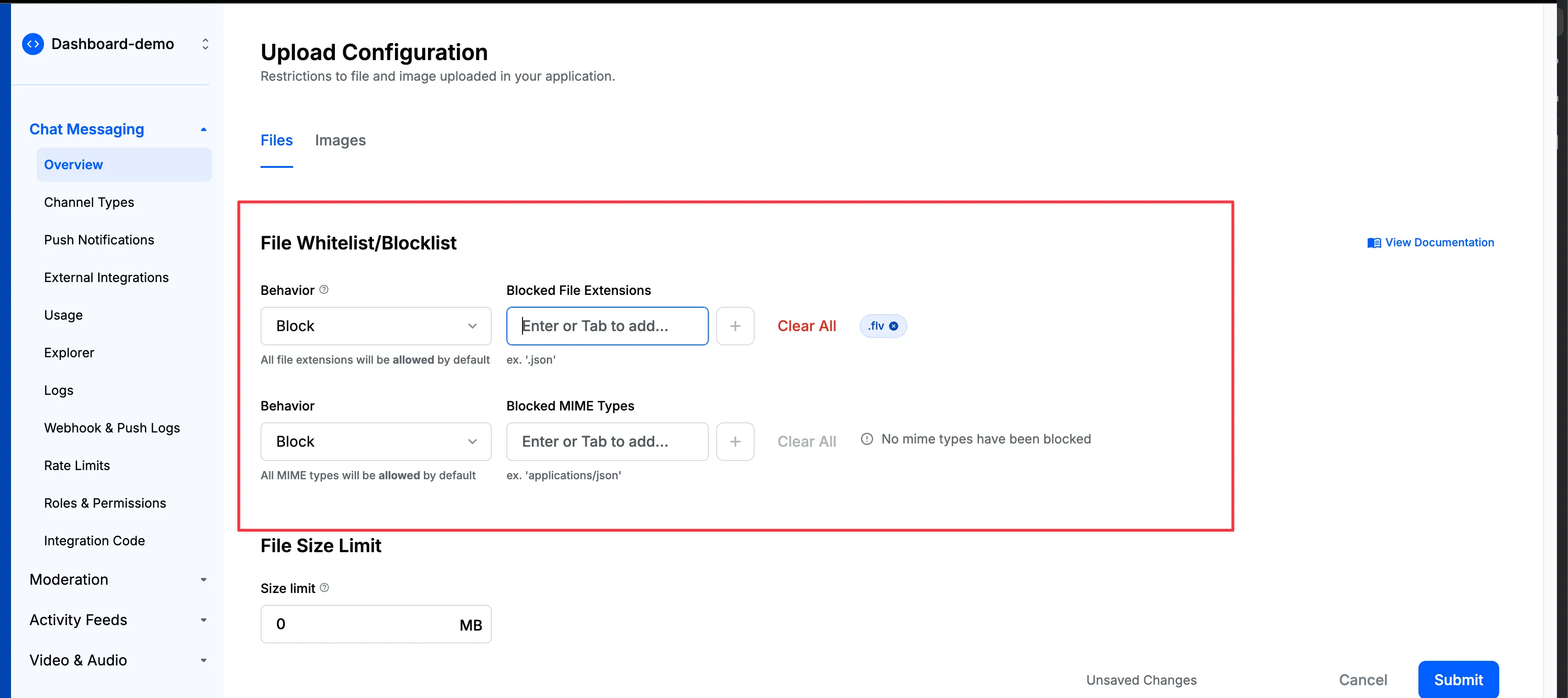

- We currently support video moderation only for mp4 and mov files. To prevent users from uploading other file types, you can configure File Upload Whitelist/Blocklists from the dashboard.

- Video moderation works asynchronously, so a video is accepted immediately without added latency. Moderation itself can take a few seconds or minutes to complete. Once it completes, the video is either blocked or flagged, depending on the configured rules.
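If you also want to check file types in your own application before upload, a minimal sketch could look like the following. The function name `is_moderatable_video` is hypothetical and not part of any Stream SDK; the check only inspects the filename extension and does not validate the file contents.

```python
from pathlib import PurePath

# The two formats this page lists as supported for video moderation.
SUPPORTED_VIDEO_EXTENSIONS = {".mp4", ".mov"}


def is_moderatable_video(filename: str) -> bool:
    """Return True if the filename's final extension is mp4 or mov (case-insensitive)."""
    return PurePath(filename).suffix.lower() in SUPPORTED_VIDEO_EXTENSIONS


# Example: decide client-side whether to allow an upload.
for name in ("clip.MP4", "holiday.mov", "screen.webm"):
    status = "accept" if is_moderatable_video(name) else "reject"
    print(f"{name}: {status}")
```

A client-side check like this is only a convenience for users; the dashboard configuration remains the place to enforce the restriction.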

Configuration

Make sure to go through the Creating A Policy section as a prerequisite.

Here’s how to set up video file moderation in your policy:

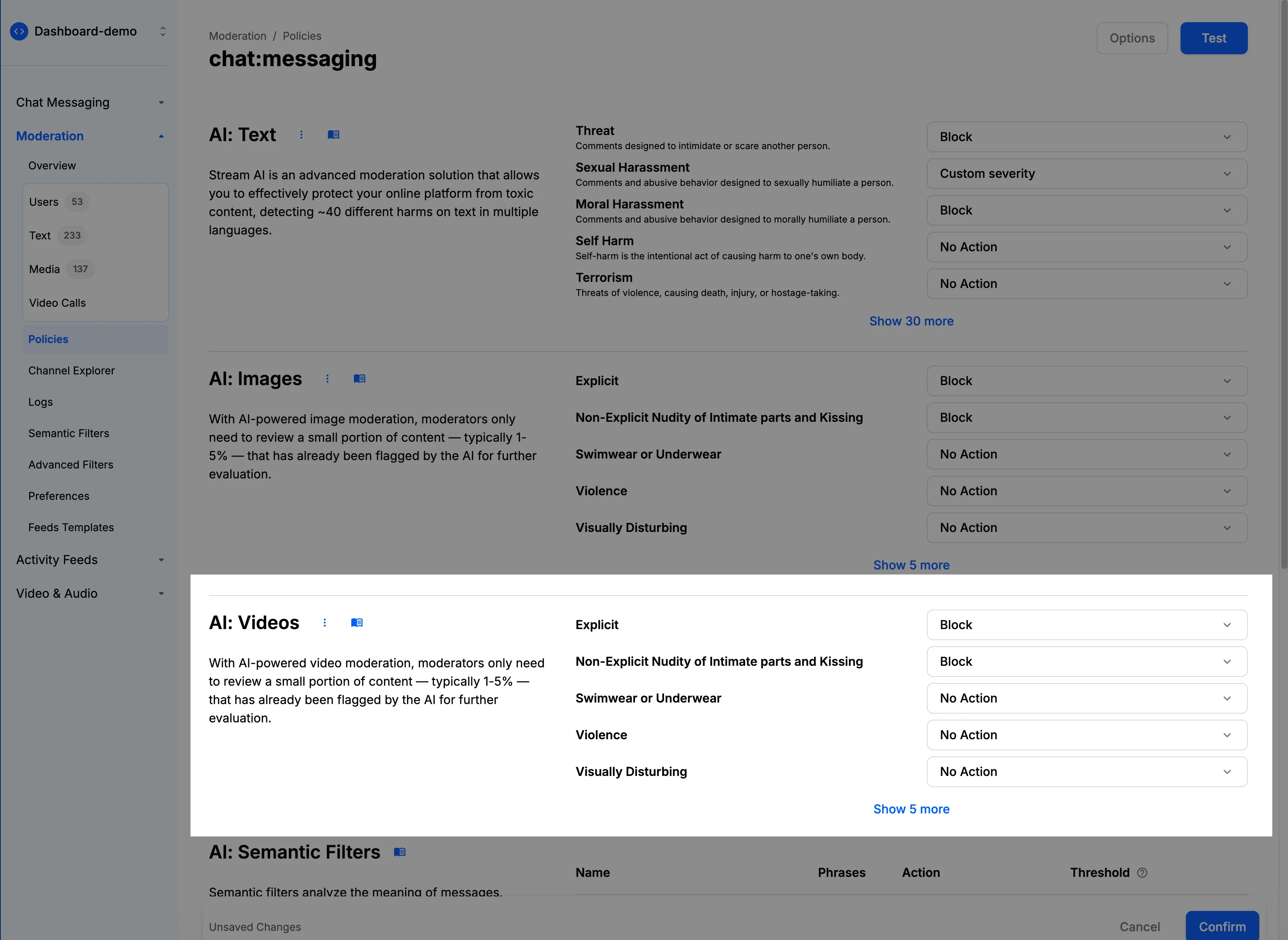

- Navigate to the “AI: Video” section in your moderation policy settings.

- You’ll find various categories of video content that can be moderated, such as nudity, violence, or hate symbols.

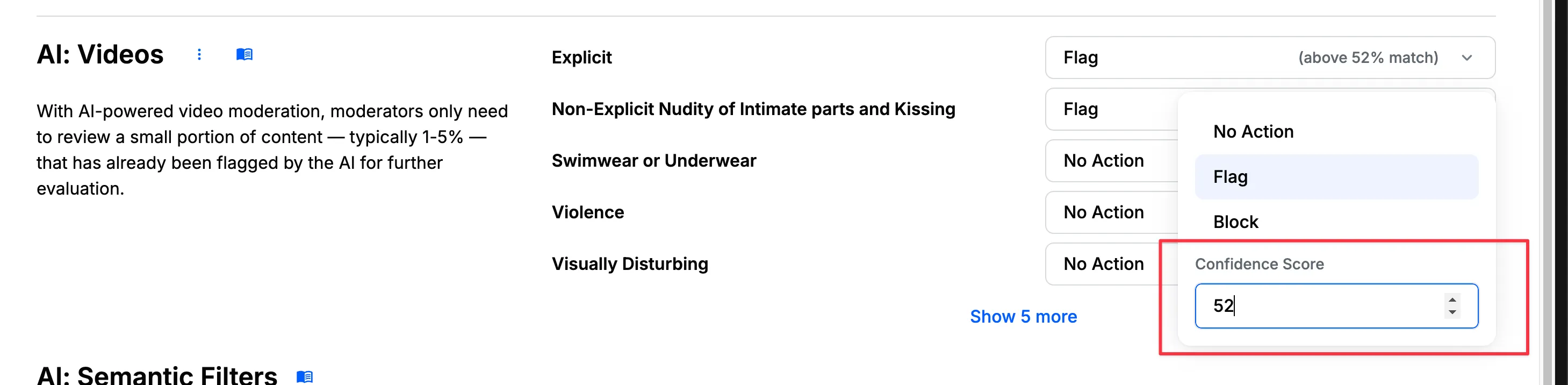

- For each category, choose the appropriate action: Flag, Block, or Shadow Block.

- You can also set a confidence threshold. The threshold is the minimum level of certainty the AI system must report for a detection before the configured action is applied. A lower threshold catches more potential violations but may increase false positives, while a higher threshold is more selective but might miss some borderline cases. Adjust it based on your moderation needs.
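The threshold trade-off can be illustrated with a small sketch. The scores below are made-up values, not real moderation output, and the 0 to 100 scale and the "greater than or equal" comparison are assumptions made for illustration; `frames_over` is a hypothetical helper.

```python
# Hypothetical per-frame confidence scores (0-100) for one category,
# keyed by timestamp in seconds. These are illustrative values only.
frame_scores = {2.0: 48.5, 5.5: 67.0, 9.0: 81.2, 12.5: 96.4}


def frames_over(scores: dict[float, float], threshold: float) -> list[float]:
    """Return the timestamps whose confidence meets or exceeds the threshold."""
    return [ts for ts, conf in sorted(scores.items()) if conf >= threshold]


# A lower threshold matches more frames (higher false-positive risk).
low = frames_over(frame_scores, 60)
# A higher threshold matches fewer frames (may miss borderline cases).
high = frames_over(frame_scores, 90)

print("threshold 60:", low)
print("threshold 90:", high)
```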

Video moderation works asynchronously to avoid latency in your application. Content is therefore sent successfully at first and moderated in the background. If the video is found to be inappropriate, the content is then flagged or blocked.

How It Works

When a video is uploaded:

- The video is accepted immediately to maintain low latency.

- Analysis begins asynchronously in the background.

- AI models evaluate the video against all configured categories.

- If confidence thresholds are met, configured actions are applied.

- The message or post is updated based on moderation results.

- Flagged videos appear in the Media Queue for moderator review.

- You receive frame-by-frame analysis with timestamps.
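The decision step in this flow can be sketched roughly as follows. This is an illustration under stated assumptions, not Stream's implementation: the `FrameDetection` and `Rule` types, the action strings, and the rule that the most severe triggered action wins are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FrameDetection:
    timestamp_ms: int   # position of the analyzed frame in the video
    category: str       # e.g. "violence" (hypothetical category key)
    confidence: float   # assumed 0-100 scale


@dataclass(frozen=True)
class Rule:
    action: str         # "flag", "shadow_block", or "block"
    threshold: float    # minimum confidence that triggers the action


# Assumed ordering: when several rules trigger, the most severe action wins.
SEVERITY = {"flag": 1, "shadow_block": 2, "block": 3}


def decide_action(
    detections: list[FrameDetection], rules: dict[str, Rule]
) -> Optional[str]:
    """Return the most severe action triggered by any detection, or None."""
    triggered = [
        rules[d.category].action
        for d in detections
        if d.category in rules and d.confidence >= rules[d.category].threshold
    ]
    return max(triggered, key=SEVERITY.__getitem__, default=None)


rules = {"violence": Rule("flag", 60.0), "explicit": Rule("block", 90.0)}
detections = [
    FrameDetection(1_000, "violence", 72.0),
    FrameDetection(4_000, "explicit", 95.0),
]
print(decide_action(detections, rules))
```

Keeping the timestamp on each detection is what lets a reviewer jump to the frame that triggered the action.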

Best Practices

For optimal video moderation:

- Configure confidence thresholds based on your tolerance for false positives

- Use the “Flag” action for borderline cases that need human review

- Use “Block” for clearly inappropriate content

- Monitor the Media Queue regularly to validate automated decisions

- Review frame-by-frame analysis with timestamps

- Adjust thresholds based on observed accuracy

- Consider your audience and community standards when configuring rules
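One way to ground threshold adjustments in observed accuracy is to measure how often moderators confirm automated detections. The sketch below assumes you keep your own record of reviewed items as (confidence, confirmed) pairs; that record format and the `precision_at` helper are hypothetical, and the sample values are made up.

```python
from typing import Optional

# Hypothetical review log: (confidence score, moderator confirmed the violation?).
reviews = [(95.0, True), (85.0, True), (70.0, False), (65.0, True), (55.0, False)]


def precision_at(
    reviewed: list[tuple[float, bool]], threshold: float
) -> Optional[float]:
    """Share of detections at or above the threshold that moderators confirmed.

    Returns None when no reviewed detection reaches the threshold.
    """
    outcomes = [confirmed for confidence, confirmed in reviewed if confidence >= threshold]
    if not outcomes:
        return None
    return sum(outcomes) / len(outcomes)


# Compare candidate thresholds before changing the policy.
for candidate in (50, 80):
    print(candidate, precision_at(reviews, candidate))
```

If precision at the current threshold is low, raising the threshold reduces false positives at the cost of missing more borderline content.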